Lessons from “The Mirage of AI Terms of Use”

In their influential paper, “The Mirage of Artificial Intelligence Terms of Use Restrictions,” legal scholars Mark A. Lemley and Peter Henderson analyze trends in generative AI. They argue that the current reliance on terms of service (ToS) to restrict the use of AI models and their outputs is both legally tenuous and practically ineffective. The paper surveys existing case law and also anticipates future litigation, noting that the lawsuits already under way concern alleged copyright infringement.

The analysis raises significant questions about how generative AI firms seek to control their technologies, and whether these methods stand up under legal scrutiny. The paper examines the terms of use of various models and asks, in the context of U.S. law, whether the output-based restrictions those terms contain are enforceable.

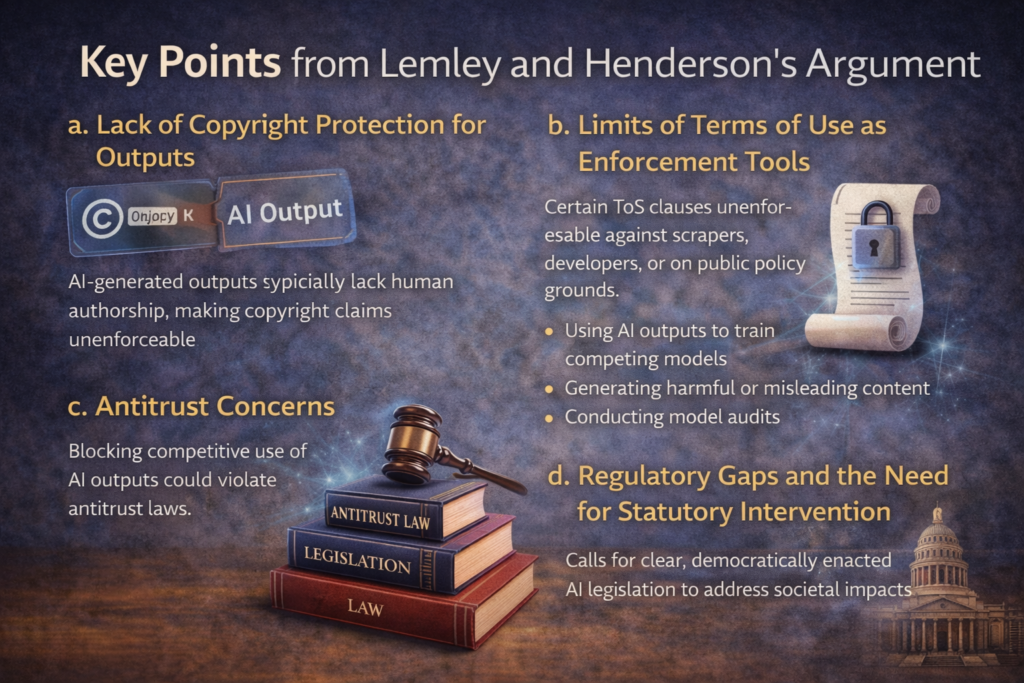

Key Points from Lemley and Henderson’s Argument

a. Lack of Copyright Protection for Outputs

The authors contend that AI-generated outputs are typically not subject to copyright protection under existing U.S. law, as they lack human authorship. As a result, any attempt by AI providers to license these outputs or impose use restrictions through intellectual property claims may lack legal standing.

b. Limits of Terms of Use as Enforcement Tools

Lemley and Henderson criticize reliance on ToS to govern AI usage, especially clauses that prohibit activities such as:

- Using AI outputs to train competing models.

- Generating harmful or misleading content.

- Conducting model audits or research.

They point out that these terms bind users who click “accept.” However, they are unenforceable against those who never agreed to them, such as scrapers or downstream developers. Moreover, many ToS provisions may be overbroad or contrary to public policy, which raises enforceability challenges in court.

c. Antitrust Concerns

Restricting users from creating competitive models using publicly available AI outputs may constitute anti-competitive behavior. This could invite scrutiny under U.S. antitrust laws, particularly if dominant AI providers use these terms to block open-source or independent development.

d. Regulatory Gaps and the Need for Statutory Intervention

The authors conclude that private contracts (like ToS) are an insufficient tool for regulating the societal impact of AI. They call for clear, democratically enacted legislation that defines acceptable uses of AI and enforces transparency. In their view, this is preferable to relying on opaque and untested private agreements.

Nevertheless, the terms of use of generative AI companies make for interesting reading.

These terms set out who owns the generated output and how that output may or may not be used.

Anyone following the barrage of lawsuits filed against these companies can guess the most prominent affirmative defense raised against allegations of direct copyright infringement: fair use.

For instance, in Concord Music Group v. Anthropic, Anthropic, the creator of Claude, has argued that using copyrighted song lyrics to train an AI model is a ‘transformative’ use. In its view, the lyrics are not being used for the purpose for which they were created. Instead, they are broken into small tokens from which statistical weights are derived.

Fair use is also being argued as a defense in:

- Thomson Reuters v. ROSS Intelligence

- New York Times Company v. Microsoft Corporation

In short, GenAI companies argue that they may use copyrighted works without permission to train their AI models because such use qualifies as fair use.

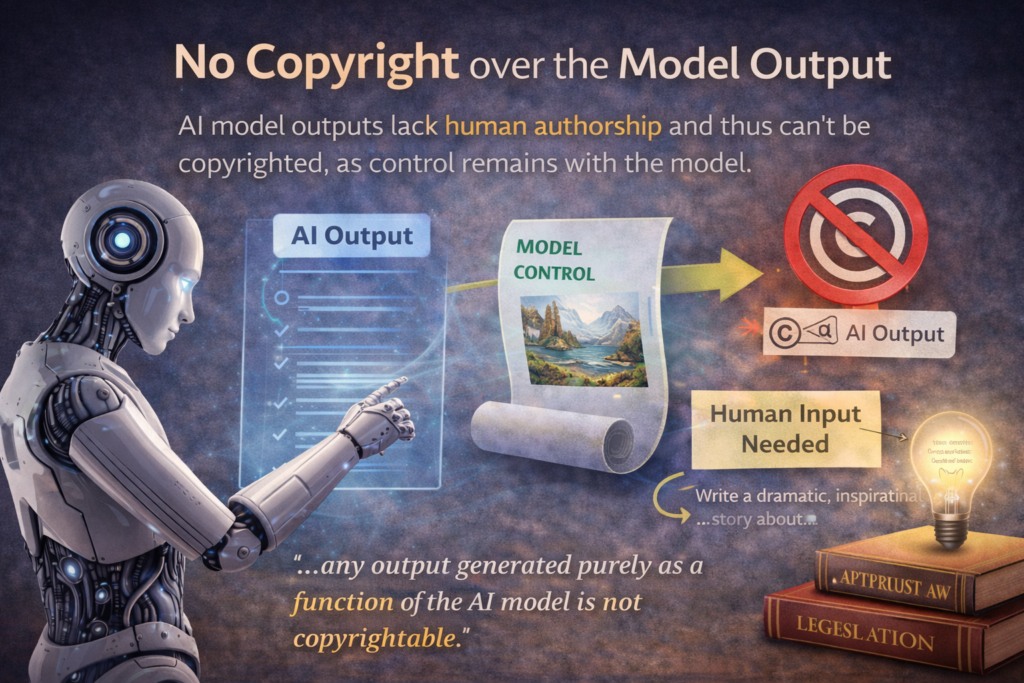

No Copyright over the Model Output

The authors' main focus is whether output generated by an AI model can be copyrighted. Relying on U.S. Copyright Office guidance, they argue that model output is not copyrightable because it lacks sufficient human authorship or creative input. Ultimate creative control rests with the model rather than with a human.

In one of his papers, Lemley argues that model output could be copyrightable if the user engages in prompt engineering that demonstrates the creative intervention of a human author. Even then, the model creator would not hold copyright over the output. Hence the conclusion that “any output generated purely as a function of the AI model is not copyrightable.”

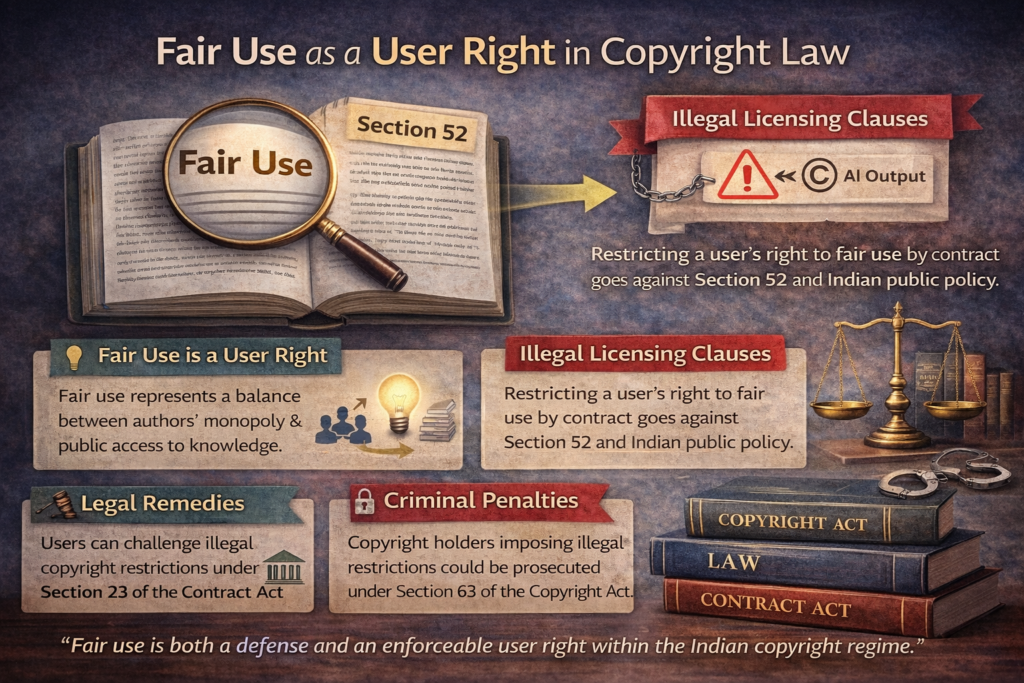

Extending Monopoly under Copyright Law

The Delhi High Court (DHC) has held that restrictive conditions imposed by a copyright holder are illegal and unenforceable if they prohibit what Section 52 of the Copyright Act (fair dealing) permits. Further, where such illegal conditions are imposed, a person could bring independent legal action against the copyright owner to prevent this misuse of copyright. The fair dealing provision is not merely a defense to infringement; it is also a user right under the Copyright Act.

They argue that a utilitarian rationale underlies the copyright regime. As a result, the right of fair dealing reflects a careful balance between securing the author’s monopoly and promoting the dissemination of information and public access to knowledge. Thus, they argue, any contractual or licensing covenant that restricts a user’s right of fair dealing will likely be held void under Section 23 of the Indian Contract Act as contrary to public policy. Owners who impose such illegal covenants could also be prosecuted under Section 63 of the Copyright Act.

Broader Implications

- For AI developers: The paper underscores the importance of crafting more legally sound and ethically robust governance mechanisms.

- For lawmakers: It calls for public regulation that balances innovation with accountability.

- For users and researchers: It highlights the legal ambiguity of many current restrictions and the importance of challenging overreach in private licensing.

Conclusion: The paper shifts the question of AI governance away from private control and toward public law, challenging assumptions about licensing, enforcement, and platform power. For students and practitioners, it underscores a critical lesson: contracts may shape platforms, but they cannot replace law.